Entelligence AI Strategy

Independent Strategic Analysis

An independent, outside-in strategic analysis of Entelligence AI — a Mayfield-backed engineering intelligence platform building deep code understanding, AI-assisted code review, and organizational insight tooling. The analysis evaluates Entelligence's current state, environmental trends shaping AI-assisted software engineering, and proposes three multi-horizon strategic themes spanning developer flow, agent supervision, and organizational memory.

This is an independent strategic analysis of Entelligence AI, an early-stage engineering intelligence company building deep code understanding, AI-assisted code review, and organizational performance tooling for software teams.

Entelligence sits at a strategically important junction. The company recently closed a $5M seed round led by Mayfield and Correlation Ventures, has shipped a multi-surface product spanning IDE, GitHub, CLI, and dashboard, and operates in a category that is being actively reshaped by the rise of AI-assisted and agentic software development. The strategic question is not whether the product is useful (early signals say it is) but where the company can most defensibly extend as the underlying market evolves.

This analysis was developed outside-in using publicly available information: product surfaces, founder content, launch posts, benchmarks, and category context. It is structured as a research-backed strategic assessment, not an internal roadmap.

Why This Analysis Exists

AI-assisted software development is entering mainstream adoption, but the infrastructure surrounding it — verification, organizational memory, governance — remains underdeveloped. As code generation becomes commoditized through Copilot, Cursor, and a growing field of agentic coding tools, the more durable strategic position is increasingly above the generation layer rather than inside it.

Entelligence has built credibly in that space. The product narrative emphasizes context, comprehension, and organizational visibility rather than autocomplete or code completion. The question this analysis tries to answer is what that positioning could grow into, and where the strongest extensions are given the current product, market, and timing.

Three questions structured the work:

- Where does Entelligence have a defensible right to win as the AI-assisted engineering category matures?

- Which environmental trends create the most leverage for Entelligence's existing capabilities?

- What strategic themes would credibly extend the product from a high-value developer tool into a systemic engineering intelligence layer?

Current State

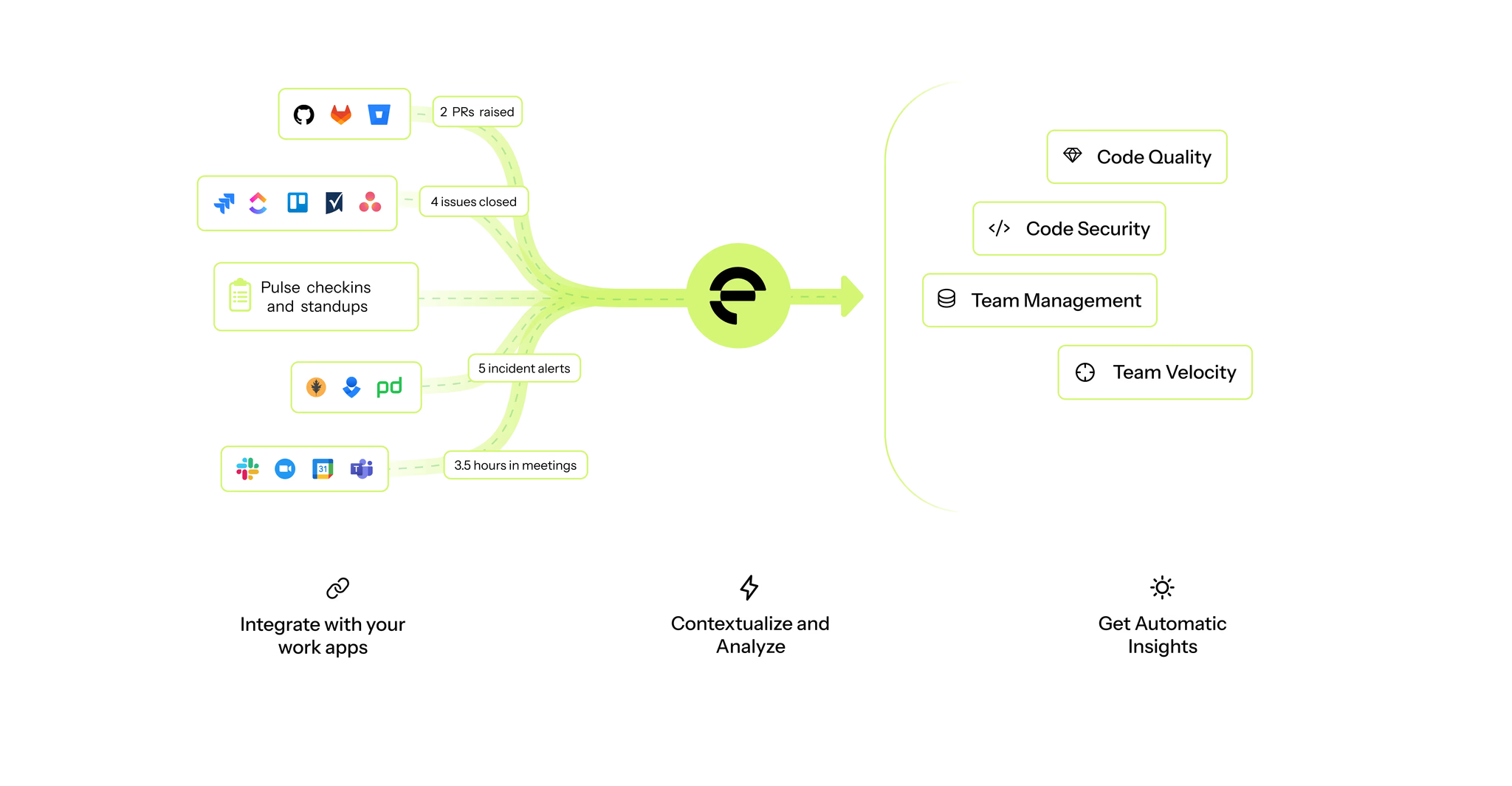

Entelligence ships a suite of engineering intelligence capabilities focused on code understanding, review quality, documentation accuracy, and organizational visibility. The core experience centers on a contextual code engine that analyzes repositories, pull requests, incidents, metrics, and planning artifacts to surface insights and suggested improvements. Key surfaces include Deep Review for pull requests, Ask Ellie for multi-tool engineering queries, automated documentation generation and updates, and team-level performance dashboards. The product reaches users across IDEs, GitHub check flows, terminals, and web interfaces.

Primary users today are engineering teams. Individual developers use Entelligence during day-to-day workflows to understand codebases, reason about changes, receive contextual review suggestions, and onboard faster. Tech leads, managers, and platform engineering teams use the product to gain visibility into review health, performance patterns, ownership, documentation gaps, and planning friction. Early traction signals point to usage in fast-scaling software organizations with high development velocity and complexity.

Differentiators

Several differentiators stand out relative to adjacent tools in the developer productivity and code review ecosystem.

Contextual depth. Entelligence goes beyond autocomplete and local code suggestions to understand repository history, ownership, dependency graphs, incidents, and metrics. This provides a richer substrate than tools that operate only within the IDE or within a single pull request.

Multi-surface operation. The product meets users across IDE extensions, CLI tooling, GitHub checks, dashboards, and Ask Ellie chat. This reduces context switching and supports both proactive and reactive workflows.

Documentation and knowledge integrity. Automated documentation generation and updates, combined with repository-level intelligence, address knowledge decay and onboarding speed. This helps reduce institutional knowledge loss and aligns with a core need in modern distributed engineering teams.

Engineering insights for leadership. Entelligence surfaces information that matters to tech leads and engineering managers — review bottlenecks, ownership patterns, documentation gaps, quality signals. This bridges the gap between developer tools and management tools, a space that many competitors do not fully address.

Positioning toward complex systems. The product narrative is aligned with teams that have significant code complexity, multiple integration points, and coordination overhead. This is a defensible segment relative to simpler developer assistants and autocomplete tools.

Market and Category Context

Entelligence sits at the intersection of several fast-evolving markets: AI-assisted development, source code intelligence, developer onboarding and documentation, code review augmentation, engineering performance and visibility, and emerging agentic programming workflows.

Using a simplified 3C lens:

- Customers increasingly seek tools that reduce onboarding time, improve review quality, and maintain documentation without manual effort. Distributed teams, rapid product cycles, and talent churn all increase the need for contextual intelligence.

- Competitors include Copilot and Cursor in the IDE and code suggestion space, Sourcegraph and Polaris for code intelligence, LinearB and Jellyfish in the insights space, and Sentry or Datadog for incident context. These tools largely remain siloed. Entelligence differentiates by connecting code with organizational context across incidents, planning, and documentation.

- Company positioning is as an engineering intelligence platform for complex systems, not as an autocomplete tool or an analytics dashboard. This stance aligns with emerging trends in agentic programming, where maintaining correctness and institutional memory becomes critical.

From a chasm and adoption-curve perspective, Entelligence appears to be gaining traction with early adopters: AI-forward startups, research-oriented engineering groups, and teams comfortable with modern tooling. Broader adoption among mid-market and enterprise engineering organizations will likely require integration maturity, procurement support, and clearer communication of value relative to existing tools.

Risks and Constraints

Several risks are visible from the outside. These are observations, not recommendations.

Category clarity. Because Entelligence operates at the intersection of code review, analytics, documentation, and AI assistance, there is a natural risk of category ambiguity. Buyers used to budgeting separately for IDE assistants, analytics tools, and documentation platforms may initially struggle to decide which mental box Entelligence belongs in.

Onboarding and surface complexity. The product intentionally meets users in many places: IDEs, pull request workflows, dashboards, Ask Ellie chat, and CLI tooling. This breadth is powerful, but it can make it harder for new teams to understand where to start and which flows to standardize on.

Integration and environment dependencies. Entelligence's value increases as it connects to more of a customer's stack — GitHub, CI, planning, incident tooling. This creates natural dependencies on the customer's environment and security posture, which can introduce extra evaluation steps in larger organizations.

Trust, privacy, and cultural perceptions. Any product that analyzes private code and aggregates engineering signals must navigate security, privacy, and team culture expectations. Different companies have different comfort levels around performance insights, automated review comments, and AI involvement in workflow.

Market timing and competitive noise. The rise of AI-assisted coding and early agentic workflows supports Entelligence's long-term vision, but the pace at which mainstream teams adopt these patterns is still uncertain. Many tools are also adding AI features simultaneously, which makes the market feel noisy.

These challenges are consistent with early category formation rather than structural weaknesses. The current product footprint, strengths, and positioning indicate that Entelligence has established a differentiated presence in the emerging engineering intelligence space, with credible use cases and strong alignment with developer and leadership pain points.

Environmental Trends

A handful of macro and technology trends create the context for the strategic themes that follow.

AI-assisted software development is entering mainstream adoption. Developers increasingly expect intelligent tooling as a default part of their workflow rather than an optional add-on. This raises the floor of expected capability and rewards products that go deeper than completion.

Agentic programming is emerging as a direction for AI-powered engineering. Agents that write or modify code introduce new needs around control, safety, review, and context. Most existing review and verification infrastructure was not designed for this.

Developer workflow fragmentation continues to increase. Teams jump between IDEs, Git platforms, CI systems, incident tooling, planning tools, and internal documentation, creating information silos that a unifying layer can address.

LLM capabilities are improving while costs trend downward. Deeper contextual analysis becomes commercially viable, allowing for richer understanding of repositories, planning artifacts, and incidents without prohibitive infrastructure costs.

Model Context Protocol and similar frameworks are enabling tool-mediated agent workflows. Agents will increasingly need contextual checks, review gates, documentation updates, and safety boundaries before merging code into production.

Code intelligence is moving beyond static analysis into semantic and behavioral understanding. Product differentiation is shifting toward systems that understand intent, invariants, dependencies, and risk rather than only syntax or linting-level correctness.

Organizational memory problems are becoming more visible. Repositories accumulate decisions, ownership patterns, tribal knowledge, incident history, and architecture context that are not captured in formal documentation. As codebases and teams grow, this gap compounds.

These trends create a favorable environment for an engineering intelligence platform that unifies context across code, incidents, documentation, and agentic workflows. They explain why the strategic themes below are well-timed rather than speculative.

Three Strategic Themes

Three themes emerged from the analysis. They are not independent feature concepts — they represent a coherent progression along the natural evolution of AI-assisted software engineering.

Theme 1: Proactive Code Understanding Inside the IDE

Help developers understand codebases faster by surfacing contextual summaries directly in the IDE before they need to ask.

Context. New and returning developers often lack immediate context on files, modules, ownership, and intent, which leads to slower onboarding and increased dependency on senior engineers. The people who feel this pain most acutely are new hires, rotation engineers, and developers touching unfamiliar parts of the codebase. The problem is magnified today because teams are distributed, codebases are large, and developer velocity expectations are higher than ever.

Insight. From a jobs-to-be-done (JTBD) perspective, developers do not simply want help writing code; they want help understanding the environment in which code lives. Today's generative coding tools are reactive and autocomplete-focused, addressing syntax and local intent but not deeper code comprehension. Using Whole Product logic, code understanding sits before code generation in the value chain, and therefore unlocks downstream value across onboarding, review quality, and maintainability. Early adopters of AI-assisted development are already using IDE helpers, which places this theme in chasm-crossing territory rather than speculative future behavior.

Recommendation. Position Entelligence as a proactive comprehension assistant by adding contextual HUD-style summaries in the IDE that explain purpose, behavior, ownership, dependencies, and risk before the developer needs to ask. This reinforces Entelligence as a thinking layer rather than a typing layer.

Initiatives. File- and module-level summaries surfaced on open. Ownership, invariants, and dependency information shown contextually. Quick links into Ask Ellie for deeper reasoning.
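As an illustration of the first two initiatives, the following is a minimal sketch of how a file-level summary could be assembled for display when a file is opened. It is not based on Entelligence's implementation: `FileContext`, `render_hud`, and every field name are hypothetical, and the sketch assumes the purpose, ownership, dependency, and invariant data have already been produced by some upstream analysis.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FileContext:
    """Hypothetical per-file context, assumed to come from upstream analysis."""
    path: str
    purpose: str
    owners: List[str]
    dependencies: List[str]
    invariants: List[str]


def render_hud(ctx: FileContext, max_items: int = 3) -> str:
    """Render a compact, four-line summary suitable for an on-open panel."""

    def short(items: List[str]) -> str:
        # Truncate long lists so the panel stays glanceable.
        if not items:
            return "none recorded"
        shown = items[:max_items]
        extra = len(items) - len(shown)
        text = ", ".join(shown)
        return text + (f" (+{extra} more)" if extra > 0 else "")

    lines = [
        f"{ctx.path}: {ctx.purpose}",
        f"Owners: {short(ctx.owners)}",
        f"Depends on: {short(ctx.dependencies)}",
        f"Invariants: {short(ctx.invariants)}",
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    example = FileContext(
        path="billing/invoice.py",  # illustrative path only
        purpose="Computes invoice totals",
        owners=["team-payments"],
        dependencies=["tax", "currency", "ledger", "audit"],
        invariants=["totals are never negative"],
    )
    print(render_hud(example))
```

The design choice worth noting is the truncation: a proactive summary only helps if it can be read in a few seconds, so longer lists are cut and a deeper surface such as Ask Ellie would carry the detail.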

Value created. Developers reduce onboarding and exploration time, senior engineers reduce interruption time, and teams increase review quality by working from shared context. This strengthens Entelligence's position against autocomplete-centric tools and improves stickiness through daily workflow value.

Theme 2: Entelligence as the Supervisor for Agentic Programming

Enable safe adoption of AI code agents by acting as the verification and review layer that ensures correctness, documentation, and alignment with team practices.

Context. Agentic programming is emerging, where autonomous or semi-autonomous agents generate code changes across repositories and services. The people who feel the associated risks are developers who need to trust the output, platform teams who manage CI/CD, and engineering managers accountable for quality and compliance. The moment matters because agent frameworks, MCP-based ecosystems, and LLM-powered tools are accelerating, while controls and verification standards have not matured at the same rate.

Insight. Using the Whole Product model, code generation only becomes viable in production when complemented by validation, documentation, guardrails, and compliance. From an RPV (Resources, Processes, Values) perspective, Entelligence already has contextual analysis, documentation synthesis, and cross-tool reasoning that can evolve into supervisory processes rather than generation processes. This positions Entelligence in the governance and safety layer of the AI software stack — a high-value wedge during category formation, and one that aligns with the chasm model where conservative buyers demand safety before automation.

Recommendation. Position Entelligence as the agent supervision layer that verifies agent-generated code for correctness, invariants, documentation updates, and scope adherence before merges or deployment. The focus is not competing with agents, but making agents safe for real teams and real systems.

Initiatives. Agent output validation gates in PR workflows. Automatic documentation and rationale generation for agent changes. Standards for correctness, invariants, and service constraints.
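To make the idea of a validation gate concrete, here is a minimal sketch of three of the checks named above: scope adherence, documentation updates, and a recorded rationale. Everything in it is an assumption for illustration, including the `AgentChange` and `GateResult` types, the `run_gate` function, and the idea that an agent declares its scope as path prefixes. A real gate would also need correctness and invariant checks, which are not modeled here.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AgentChange:
    """Hypothetical description of an agent-authored pull request."""
    declared_scope: List[str]   # path prefixes the agent was asked to touch
    changed_files: List[str]    # files the pull request actually modifies
    docs_updated: bool = False  # whether documentation changed with the code
    rationale: str = ""         # agent-supplied explanation of the change


@dataclass
class GateResult:
    passed: bool
    failures: List[str] = field(default_factory=list)


def run_gate(change: AgentChange) -> GateResult:
    """Apply three simple checks and collect every failure, not just the first."""
    failures: List[str] = []

    # Scope adherence: every changed file must sit under a declared prefix.
    out_of_scope = [
        f for f in change.changed_files
        if not any(f.startswith(prefix) for prefix in change.declared_scope)
    ]
    if out_of_scope:
        failures.append("out of scope: " + ", ".join(out_of_scope))

    if not change.docs_updated:
        failures.append("documentation not updated")

    if not change.rationale.strip():
        failures.append("missing rationale")

    return GateResult(passed=not failures, failures=failures)


if __name__ == "__main__":
    change = AgentChange(
        declared_scope=["services/billing/"],
        changed_files=["services/billing/invoice.py", "services/auth/token.py"],
        docs_updated=True,
        rationale="Fix rounding in invoice totals",
    )
    result = run_gate(change)
    print(result.passed, result.failures)
```

Collecting all failures rather than stopping at the first gives the reviewer, or the agent itself, a complete list to act on in one pass.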

Value created. Developers gain trust in AI output, platform teams gain safety and compliance, and leadership gains confidence that automation will not degrade quality. This creates a moat by making Entelligence part of the control plane for autonomous engineering, rather than a feature competing in code completion.

Theme 3: Build the MemoryOS for Engineering Organizations

Create a machine-consumable knowledge graph that captures decisions, dependencies, ownership, incidents, and documentation to form persistent organizational memory.

Context. Engineering organizations lose knowledge through employee turnover, changing priorities, system rewrites, and fragmented tools. The people who feel this most are new hires, DevOps engineers, tech leads, architects, and managers who need to understand why systems behave a certain way and why past decisions were made. The timing is critical because autonomous agents, distributed teams, and complex systems all depend on intact contextual memory to operate effectively.

Insight. Using Category Design logic, "engineering memory" is an unoccupied category that sits above documentation tools and below code execution, creating a new system layer. From a total addressable market (TAM) perspective, MemoryOS expands the buyer from individual developers to platform teams and leadership. Using RPV, Entelligence already has the resource base for this through multi-source ingestion, code understanding, documentation synthesis, and organizational insight. The MemoryOS becomes the substrate for both human reasoning and agentic reasoning, enabling long-run defensibility.

Recommendation. Evolve Entelligence into a MemoryOS that constructs and exposes a persistent knowledge graph of code, decisions, incidents, ownership, and invariants — enabling both humans and agents to reason about and evolve complex systems safely. The emphasis is on structure, persistence, and machine interface, not one-off insights.

Initiatives. Linking code changes to incidents, ownership, and documentation. APIs for agents to query organizational memory. Query surfaces for leadership and platform engineering.
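The linking and query initiatives can be pictured as a small typed graph. The sketch below is a toy, in-memory version under stated assumptions: the `MemoryGraph` class, its method names, the node kinds, the relation labels, and the identifiers such as `INC-1` and `ADR-1` are all hypothetical, and a production system would need persistence, access control, and provenance that are omitted here.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


class MemoryGraph:
    """Toy in-memory graph of typed nodes and labeled edges (hypothetical)."""

    def __init__(self) -> None:
        self.kinds: Dict[str, str] = {}
        self.edges: Dict[str, List[Tuple[str, str]]] = defaultdict(list)

    def add_node(self, node_id: str, kind: str) -> None:
        self.kinds[node_id] = kind

    def link(self, src: str, relation: str, dst: str) -> None:
        # Store both directions so a query can start from either end.
        self.edges[src].append((relation, dst))
        self.edges[dst].append((relation, src))

    def related(self, node_id: str, kind: str) -> List[str]:
        """Return ids of directly linked nodes of the given kind, sorted."""
        neighbours = self.edges.get(node_id, [])
        return sorted({dst for _, dst in neighbours if self.kinds.get(dst) == kind})


def build_example() -> MemoryGraph:
    """Assemble a tiny graph around one file; all ids are illustrative."""
    graph = MemoryGraph()
    graph.add_node("billing/invoice.py", "file")
    graph.add_node("team-payments", "owner")
    graph.add_node("INC-1", "incident")
    graph.add_node("ADR-1", "decision")
    graph.link("team-payments", "owns", "billing/invoice.py")
    graph.link("INC-1", "involved_change_in", "billing/invoice.py")
    graph.link("ADR-1", "explains", "billing/invoice.py")
    return graph


if __name__ == "__main__":
    g = build_example()
    # The kind of question an agent or a tech lead might ask before a change.
    print(g.related("billing/invoice.py", "incident"))
    print(g.related("billing/invoice.py", "owner"))
```

The same `related` call serves both audiences described above: an agent can check incident history before modifying a file, and a platform engineer can ask which files a given incident touched.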

Value created. Developers onboard faster, leadership makes better decisions, and organizations retain knowledge beyond individuals. This creates a strong data moat, a platform position, and a durable strategic advantage, because organizational memory accumulates and compounds over time and is therefore hard for competitors to replicate.

Why These Themes Cohere

The three themes are a coherent multi-horizon progression rather than a list of unrelated ideas.

Theme 1 improves developer understanding within existing workflows, creating immediate daily utility for individual contributors. Theme 2 enables safe agentic workflows by introducing structure, validation, and trust, which becomes important as more teams adopt autonomous or semi-autonomous code generation. Theme 3 builds toward a MemoryOS that encodes organizational knowledge in a persistent, machine-consumable way, which becomes critical as systems, teams, and agents depend on shared context.

Mapped to a multi-horizon strategy:

- Horizon One focuses on developer value today by improving comprehension and reducing onboarding friction.

- Horizon Two focuses on AI safety and supervision as agentic programming becomes more common and organizations need assurance before automation touches production systems.

- Horizon Three focuses on the MemoryOS platform as a long-term organizational layer that creates durable data moats and expands the buyer audience beyond individual developers to platform engineering and leadership.

The near-term work strengthens product usage, retention, and developer sentiment. The medium-term work positions Entelligence at the control layer of AI-driven code generation. The long-term work establishes a platform that accumulates value over time and cannot be easily replicated by competitors.

Timing Against Public Cadence

Entelligence's public activity over recent quarters indicates a strong execution rhythm across product launches, refinement cycles, and community engagement. The quarterly read below is based on public signals (launch posts, founder content, ecosystem activity, and benchmarks) — not internal information.

- Q1. Ask Ellie launch and early rollout. Customer feedback loops through direct engagement. Free-access signups and onboarding momentum. Launch events and community activation.

- Q2. Iteration on Deep Review, Docs updates, and Ask Ellie UX. Founder-led narrative and content shaping. Internal dogfooding and performance improvements. Expansion of documentation auto-update and review quality.

- Q3. Benchmarking and evaluation work (AI code review evaluation framework). Increased thought leadership and developer education. Ecosystem refinement through IDE, CLI, and GitHub surfaces.

- Q4. Continued refinement of core surfaces. Broader developer advocacy and visibility. Preparation for deeper adoption among mid-market teams. Stronger external credibility through benchmarks and usage stories.

The cadence shows a company shipping frequently, learning quickly, and refining narrative and product quality in parallel. That rhythm is compatible with layered strategic themes that scale with existing surfaces rather than introducing new ones prematurely.

Mapped against the three themes:

- Theme 1 (Proactive Code Understanding) fits naturally into Q2–Q3, where public signals show focus on UX refinement, IDE surfaces, and onboarding improvements.

- Theme 2 (Agent Supervision) aligns with Q3–Q4 as agent frameworks mature and benchmarking becomes more visible. It complements existing review and verification surfaces.

- Theme 3 (MemoryOS) maps to a longer arc beginning late Q4 and extending into following cycles. It matches the pace of category evolution, broadens the buyer persona, and compounds value over time without disrupting near-term execution.

Strategic Takeaways

A few takeaways extend beyond Entelligence specifically.

Position above the generation layer when generation is commoditizing. When a category is defined by a fast-improving primitive (code generation in this case), the more durable strategic position is usually the layer above it — context, verification, governance — rather than competing inside the primitive. Generation rents value from the model. The supervisory layer accrues value from the workflow.

Multi-surface presence is a feature, not a flaw, when context is the product. Many devtools simplify by picking a single surface (IDE, dashboard, CLI). For products whose value is shared context, multi-surface presence is the differentiator. The cost is onboarding complexity, which is a UX problem, not a strategic one.

Organizational memory is an unbuilt category. Documentation tools, code search, and analytics dashboards each address pieces of it, but no one currently provides a persistent, machine-consumable substrate of decisions, incidents, ownership, and invariants. As autonomous agents enter engineering workflows, the value of that substrate compounds.

Multi-horizon framing matters when categories are forming. A single-horizon strategy locks a company into either today's product or tomorrow's vision. A multi-horizon framing — daily developer value now, agent safety in the medium term, organizational memory long term — makes the strategy both useful in the present and defensible as the market evolves.

Viewed through those takeaways, this analysis is less about Entelligence specifically and more about how the AI-assisted engineering category is likely to mature: generation commoditizes, context wins, and the products that endure are the ones that sit above the generation layer with durable claims on developer trust and organizational memory.